You can do this in the ViewDidLoad method. The next step is to get authorization from the user for the speech recognizer. Private SFSpeechRecognizer speechRecognizer = new SFSpeechRecognizer(new NSLocale("en_US")) Private AVAudioEngine audioEngine = new AVAudioEngine() Private SFSpeechRecognitionTask recognitionTask Private SFSpeechAudioBufferRecognitionRequest recognitionRequest The SFSpeechRecognizer can be passed a locale for determining the correct language and region for the speech recognition. These objects pertain to using the Speech API or (in the case of the AVAudioEngine) the built-in microphone.

We'll create some variables at the top of our class which we'll use throughout the example. I've modified the sample slightly by adding a button to turn the mic on and off when the uses wants to begin and end voice input. Rather than start from scratch, we'll be modifying the FullTextFilter sample to demonstrate the Speech API working with FlexGrid. We'll need to request authorization from the user to use a speech recognizer, create a speech recognition request, and then pass the request to our speech recognizer. There's a number of different steps to follow while implementing speech recognition in iOS 10. Our example will use live audio transcription which is why we will also need to add the second permission for the microphone. You can add these permissions directly into your ist by adding the NSSpeechRecognitionUageDescription (for speech recognition) and NSMicrophoneUsageDescription (for accessing the microphone). Since the Speech API transmits data back and forth from Apple's server, it's important that you both are transparent about the process to your users and add the correct permissions into your app. Setting up permission for Speech Recognition Using the Speech API requires that you target iOS 10.

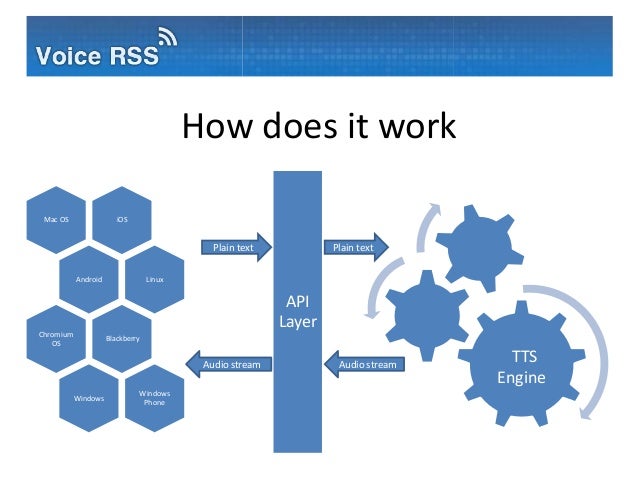

Both Apple and Xamarin have some documentation related to the Speech API, how it works, and how to implement it. The speech API generally relies on Apple's servers for processing speech, and will return a result back to you (some newer iOS devices are able to do this locally for certain languages). The API works with both live and prerecorded speech, and gives you transcriptions, multiple interpretations, and confidence levels for the results it provides. The new Speech API is a powerful to add speech recognition into your app. In this article we'll examine how you can integrate speech recognition into your Xamarin.iOS application by guiding you through creating a speech driven FlexGrid with the ability to filter based on your spoken phrase. Xamarin has ported all of these new APIs to their platform giving you the ability to try these new features in your Xamarin.iOS apps. The Speech API especially provides a compelling way to incorporate speech recognition into your application. Apple has introduced a few interesting new features in the iOS 10 for working with speech including the new Speech API and SiriKit.
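As a rough sketch of the authorization step in ViewDidLoad, something like the following could work. The class name `SpeechFilterViewController` and the `micButton` field are hypothetical stand-ins for the sample's own view controller and mic toggle button, so treat this as an illustration under those assumptions rather than the sample's actual code. It keeps the button disabled until the user has granted permission.

```csharp
using System;
using Foundation;
using Speech;
using UIKit;

// Hypothetical view controller; in the real sample this would be the
// existing FullTextFilter view controller.
public class SpeechFilterViewController : UIViewController
{
    // Hypothetical mic toggle button (assumed to be added to the view elsewhere).
    UIButton micButton = new UIButton(UIButtonType.System);

    public override void ViewDidLoad()
    {
        base.ViewDidLoad();

        // Keep the mic button disabled until we know the user's decision.
        micButton.Enabled = false;

        SFSpeechRecognizer.RequestAuthorization((SFSpeechRecognizerAuthorizationStatus status) =>
        {
            // The callback is not guaranteed to arrive on the main thread,
            // so hop back to it before touching any UI.
            InvokeOnMainThread(() =>
            {
                // Only the Authorized status allows speech recognition;
                // Denied, Restricted, and NotDetermined leave the button off.
                micButton.Enabled = status == SFSpeechRecognizerAuthorizationStatus.Authorized;
            });
        });
    }
}
```

The first time this runs, iOS shows the permission prompt using the NSSpeechRecognitionUsageDescription text from Info.plist; on later launches the stored decision is returned without prompting.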
